What is Subtitling in Film-Making?

Subtitling is the process of translating the spoken dialogue into written text on the screen. It is a type of audiovisual translation, with its own set of rules and guidelines.

What Are The Most Common Universal Specifications Of A Good Subtitle?

- Ideally, a subtitle should have a minimum duration of a second and a maximum duration of 6 or 7 seconds on screen.

- The subtitles should appear as the characters start speaking and should disappear when they stop so that they are synchronized with the audio.

- Subtitles should be attuned to the audio so that they read as natural and fluent. Ideally, the viewer is undisturbed by the subtitles and almost unaware of reading them.

- Montreal says, “The eye reads slower than the ear hears”; so while translating, the subtitler must condense the dialogue spoken on screen.

- The space available for the translation is limited to 2 lines of subtitles, which are usually centered at the bottom of the screen.

- Each line cannot contain more than 35 characters (i.e. any letter, symbol or space). The subtitle (formed by 2 lines) can have up to 70 characters.

- As noted above, a subtitle stays on screen for at least a second and at most about 6 seconds. There is a direct relationship between the duration of a subtitle and the number of characters it can contain and still be read.

- These parameters are based on an average reading speed: the less time a subtitle is on screen, the less text we can read. The current average reading speed is estimated at 3 words a second. So to read a complete subtitle of 2 lines and 70 characters, which holds some 12 words, we need at least 4 seconds. If less time is available, the subtitle must contain fewer characters.
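The limits above can be turned into a quick check. The following is only a sketch: the function names are made up for illustration, and the reading rate of 17.5 characters per second is an assumption obtained by scaling linearly from the "70 characters in 4 seconds" figure quoted above.

```python
from typing import List

# Limits quoted in the list above.
MAX_CHARS_PER_LINE = 35
MAX_LINES = 2
MIN_DURATION_S = 1.0
MAX_DURATION_S = 6.0

# Assumption: scale linearly from "70 characters need at least 4 seconds".
CHARS_PER_SECOND = 70 / 4  # 17.5 characters per second


def max_chars_for_duration(duration_s: float) -> int:
    """Return the character budget for a subtitle shown for duration_s seconds."""
    # Keep the duration inside the 1 to 6 second window before scaling.
    clamped = min(max(duration_s, MIN_DURATION_S), MAX_DURATION_S)
    budget = int(clamped * CHARS_PER_SECOND)
    # A subtitle never holds more than 2 lines of 35 characters.
    return min(budget, MAX_CHARS_PER_LINE * MAX_LINES)


def check_subtitle(lines: List[str], duration_s: float) -> List[str]:
    """Return a list of human-readable problems; an empty list means it passes."""
    problems = []
    if len(lines) > MAX_LINES:
        problems.append(f"{len(lines)} lines; the limit is {MAX_LINES}")
    for number, line in enumerate(lines, start=1):
        if len(line) > MAX_CHARS_PER_LINE:
            problems.append(
                f"line {number} has {len(line)} characters; "
                f"the limit is {MAX_CHARS_PER_LINE}"
            )
    if not MIN_DURATION_S <= duration_s <= MAX_DURATION_S:
        problems.append(f"duration {duration_s}s is outside the 1 to 6 second range")
    total_chars = sum(len(line) for line in lines)
    if total_chars > max_chars_for_duration(duration_s):
        problems.append(
            f"{total_chars} characters is too much text for {duration_s}s on screen"
        )
    return problems
```

Under these assumptions, a subtitle shown for 2 seconds gets a budget of 35 characters, so two full lines of text would be flagged as too long for that duration.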

What Standards Should Good Subtitles Meet?

- Subtitles can be either a written translation of dialogue in a foreign language or a written rendering of the dialogue in the same language. They may include added information to help viewers who are deaf or hard of hearing follow the dialogue, and they also help people who cannot understand the spoken dialogue or who have trouble recognizing accents.

- Good subtitles convey to the viewer as much of the experience of watching with sound as possible. The text needs to be readable, match the dialogue as closely as possible, be well-timed and not obscure important parts of the video.

- Achieving all of this at the same time isn’t always possible, so the subtitler needs to make an editorial decision about the best balance. Here are some things to consider:

Choosing the best font-style for subtitles:

When choosing the subtitle font, pick a color other than white and use an outline. Subtitles are traditionally black or white, but this is just because films used to be black and white. There’s no real reason that they can’t be a different color, preferably one that stands out or is uncommon in nature, like yellow.

As for the font itself, I’d recommend one of the following:

- Helvetica: it has distinct vertical and horizontal lines that stand out against the background.

- Arial: this subtitle font is very popular nowadays and is familiar to most viewers from everyday use.

- Verdana: it looks clean on film or video.

What Is The Technical Process Of Subtitling?

Subtitles may be provided in the source language of the video only, or in one or several other languages, together with time-codes indicating the exact time at which a subtitle appears and how long it remains on screen. The process of subtitling consists of the following phases:

- Spotting: The process of defining the in and out times of individual subtitles so that they are synchronized with the audio, and adhere to the minimum and maximum duration times, taking the shot changes into consideration.

- Translation: Translating from the source language, localizing and adapting the text while staying within the permitted number of characters.

- Correction: Reviewing sentence structure, comprehension and the overall flow of dialogue. The text must read naturally and follow the usual punctuation, spelling rules, and language conventions. The subtitles must be split so that viewers can easily understand them. Above all, they must not distract the viewer. The basic criteria include punctuation, line breaks, hyphens, ellipses, and italics.

- Simulation: After spotting, translation, and correction, the film must be reviewed in a simulation session: screening with the subtitles on the video screen just as they will appear on the final product. Modifications of text and timing can be made during the simulation.
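The splitting step in the correction phase can be roughed out in code. This is a minimal sketch, assuming the limit of 2 lines of 35 characters mentioned earlier; `split_into_lines` is a hypothetical helper, and the standard library's `textwrap.wrap` only breaks at spaces, so it does not know about the linguistic line-break conventions a human subtitler would apply.

```python
import textwrap
from typing import List


def split_into_lines(text: str, max_chars: int = 35, max_lines: int = 2) -> List[str]:
    """Break one subtitle into lines of at most max_chars characters.

    Raises ValueError when the text needs more than max_lines lines,
    which signals that the translation has to be condensed.
    """
    lines = textwrap.wrap(text, width=max_chars)
    if len(lines) > max_lines:
        raise ValueError(
            f"text needs {len(lines)} lines; condense it to fit {max_lines}"
        )
    return lines
```

A check like this is best treated as a first pass before the simulation session, where line breaks can still be adjusted by eye.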

What Is The Importance Of Subtitling In Film?

- SDH (Subtitles for the Deaf and Hard of Hearing) is vital for people who are deaf or hard of hearing. Subtitles provide them with access to important information as well as a means of entertainment.

- Subtitles are used for movies and TV shows so that a wider audience can appreciate and enjoy them. Viewers can understand the dialogue and relate to it better in their own language.

- Sometimes, a movie or TV show may have some dialogue in a foreign language. Subtitling such movies can help the viewer understand the context better.

What Are Some Examples Of Subtitle Script Types?

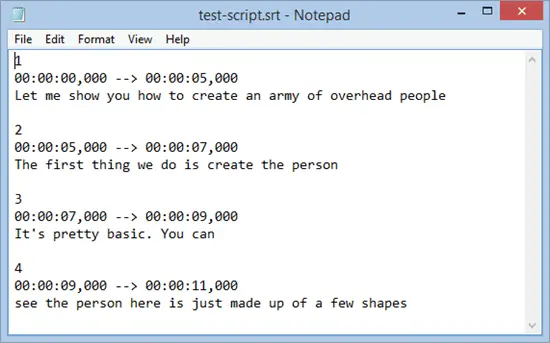

SubRip

- The most common and basic of all subtitle formats is the SubRip caption format, also referred to as the .srt caption format. Most online video sites accept .srt captions, including but not limited to YouTube, Vimeo, and Wistia. Here is an example of a .srt caption file.

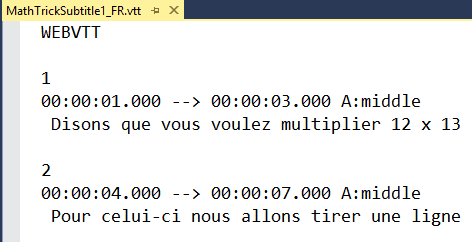
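For readers who prefer text to screenshots, here is a small sketch that writes cues in the .srt layout: a running number, a timing line with a comma before the milliseconds, the subtitle text, and a blank line between cues. The helper names and the `(start, end, text)` tuple shape are assumptions made for this example only.

```python
from typing import List, Tuple


def srt_timestamp(seconds: float) -> str:
    """Format a time in seconds as HH:MM:SS,mmm (SubRip uses a comma)."""
    total_ms = round(seconds * 1000)
    hours, remainder = divmod(total_ms, 3_600_000)
    minutes, remainder = divmod(remainder, 60_000)
    secs, millis = divmod(remainder, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{millis:03d}"


def to_srt(cues: List[Tuple[float, float, str]]) -> str:
    """Render (start_seconds, end_seconds, text) cues as SubRip text."""
    blocks = []
    for index, (start, end, text) in enumerate(cues, start=1):
        timing = f"{srt_timestamp(start)} --> {srt_timestamp(end)}"
        blocks.append(f"{index}\n{timing}\n{text}\n")
    # A blank line separates consecutive cues.
    return "\n".join(blocks)


print(to_srt([(1.0, 4.0, "Where were you last night?"),
              (4.5, 6.0, "At home.")]))
```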

WebVTT

- WebVTT captions, or the .vtt caption format, are recommended on Vimeo and for most HTML5 players. WebVTT captions are similar to .srt captions, with only minor differences. The file has to start with an introductory line: WEBVTT.

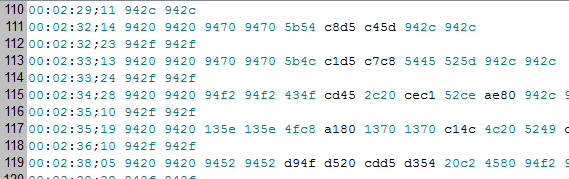

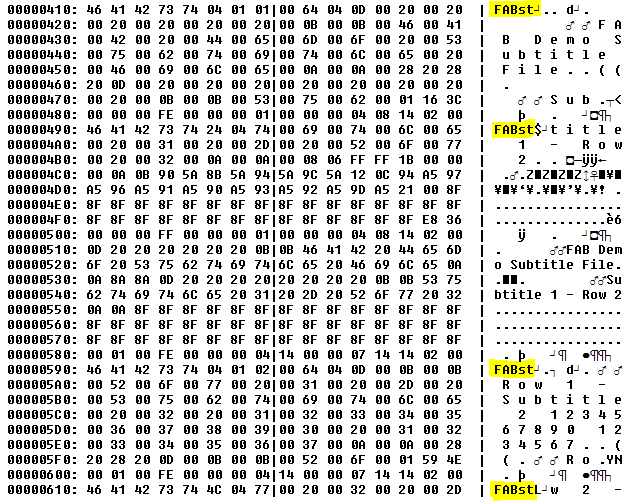
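Because the two formats are so close, a simple .srt file can usually be converted with very little code. The sketch below covers only the two differences it names: the WEBVTT header line, and a period instead of a comma before the milliseconds on timing lines. The function name is hypothetical, and styling tags or positioning data in a real file would need more care.

```python
import re

# Matches an SRT timestamp such as 00:00:01,000 and captures both halves.
_SRT_TIME = re.compile(r"(\d{2}:\d{2}:\d{2}),(\d{3})")


def srt_to_vtt(srt_text: str) -> str:
    """Convert plain SubRip text to WebVTT text."""
    converted = []
    for line in srt_text.splitlines():
        # Only touch timing lines, so commas in the dialogue are left alone.
        if "-->" in line:
            line = _SRT_TIME.sub(r"\1.\2", line)
        converted.append(line)
    body = "\n".join(converted).strip()
    return "WEBVTT\n\n" + body + "\n"
```

The cue numbers from the .srt file are kept; WebVTT treats them as optional cue identifiers.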

Scenarist Closed Captions

- The Scenarist Closed Captions format, normally abbreviated as .scc, is another common format. It is a recommended caption format for Amazon Direct, a video service from Amazon. The .scc caption format is also used for DVDs. Below is a screenshot sample of this caption format.

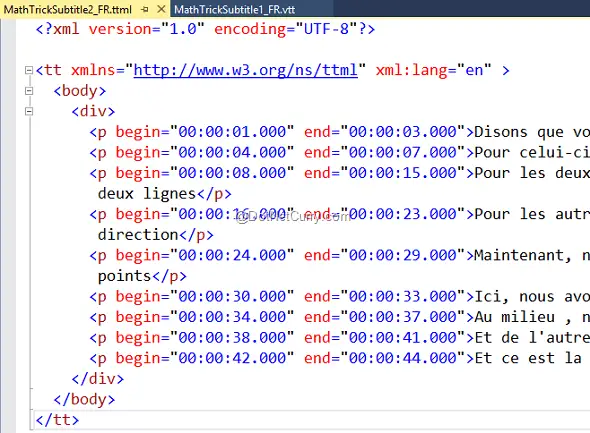

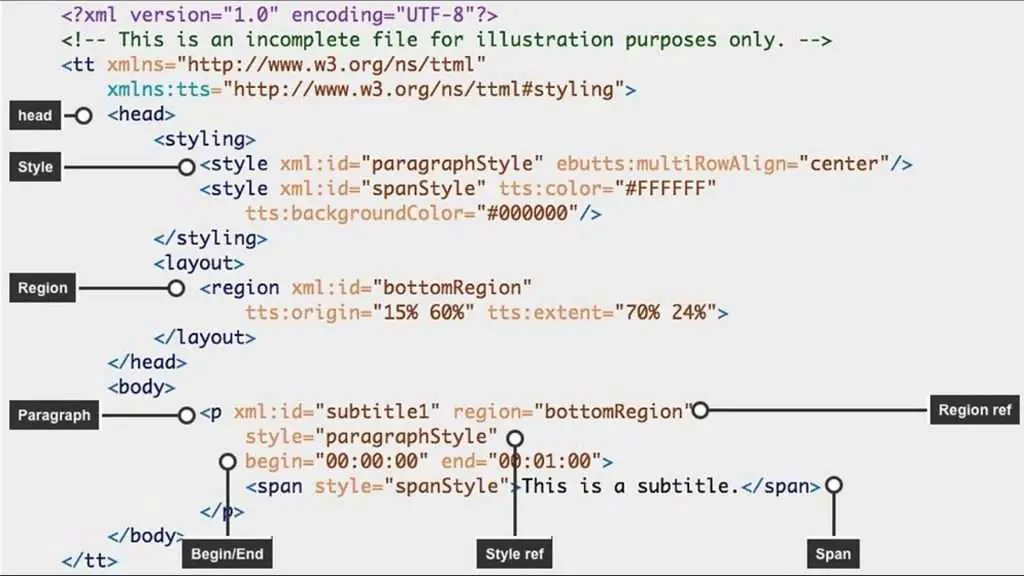

Timed Text Markup Language

- TTML stands for Timed Text Markup Language. It’s a general-purpose format for describing how text changes over time. It’s based on XML and is a bit like HTML.
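To give a feel for the XML structure, here is a minimal sketch that builds a bare-bones TTML document with Python's standard library. It uses only the `tt`, `body`, `div` and `p` elements with `begin` and `end` attributes; the `build_ttml` helper and its cue tuples are assumptions for illustration, and real deliverables usually carry styling and layout sections that are left out here.

```python
import xml.etree.ElementTree as ET
from typing import List, Tuple

TTML_NS = "http://www.w3.org/ns/ttml"
XML_NS = "http://www.w3.org/XML/1998/namespace"

# Serialize TTML elements without a prefix (as the default namespace).
ET.register_namespace("", TTML_NS)


def build_ttml(cues: List[Tuple[str, str, str]], lang: str = "en") -> str:
    """Build a minimal TTML document from (begin, end, text) cues.

    begin and end are clock-time strings such as "00:00:01.000".
    """
    root = ET.Element(f"{{{TTML_NS}}}tt", {f"{{{XML_NS}}}lang": lang})
    body = ET.SubElement(root, f"{{{TTML_NS}}}body")
    div = ET.SubElement(body, f"{{{TTML_NS}}}div")
    for begin, end, text in cues:
        # Each subtitle becomes one <p> element with its timing attributes.
        paragraph = ET.SubElement(
            div, f"{{{TTML_NS}}}p", {"begin": begin, "end": end}
        )
        paragraph.text = text
    return ET.tostring(root, encoding="unicode")


print(build_ttml([("00:00:01.000", "00:00:04.000", "Where were you last night?")]))
```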

STL

- STL is a widely used legacy format designed for broadcast. It’s a binary format, so it is not easily editable by a human, and it has limited styling and positioning options.

Internet Media Subtitles and Captions

- IMSC stands for Internet Media Subtitles and Captions. It’s a profile (subset) of TTML specifically for subtitles and is being adopted worldwide.

What Is The Process Of Creation, Delivery, And Display Of Finished Subtitles?

- The finished subtitle file is used to add the subtitles to the picture in one of three ways: directly into the picture (open subtitles); embedded in the vertical blanking interval and later superimposed on the picture by the end user with the help of an external decoder or a decoder built into the TV; or converted (rendered) to TIFF or BMP graphics that are later superimposed on the picture by the end user’s equipment. Subtitles can also be created by individuals using freely available subtitle-creation software.

- Some programs and online software allow automatic captions, mainly using speech-to-text features. For example, on YouTube, automatic captions are available in English, Dutch, French, German, Italian, Japanese, Korean, Portuguese, Russian, and Spanish. If automatic captions are available for the language, they will automatically be published on the video.

Some Important Points To Note About Subtitling

- It is important to understand that subtitling is not the same as closed captioning, which is text aimed at viewers who are deaf or hard of hearing. Closed captioning is more specific in nature than subtitling because it indicates who is speaking and describes relevant sounds such as a doorbell, a dog barking, or music being played. Captions are generally displayed in a black box near the bottom of the screen, appearing a second or two after the spoken words. In contrast, subtitles are targeted toward individuals who can hear but may not be able to understand what is being said, because the dialogue is garbled, is in a foreign language, or is being presented to a foreign audience. For all of these reasons, it is vital that the transcription and/or the translation be precise and accurate. Otherwise, the subtitles will not properly reflect the dialogue of the video, and the message that the video is trying to convey will not be understood by the target audience.

- Notably, subtitles, as the name suggests, are usually placed at the bottom of the screen. Captions, on the other hand, may be placed in different locations on the screen in order to make clear to the audience who is speaking. This is especially useful for deaf individuals who can’t rely on voice distinctions to pinpoint the speaker. Subtitles and captions have some of the same hurdles to overcome, such as the vocabulary and reading skills of the target audience (see challenges faced during subtitling).

Conclusion

- That said, the primary goal of captions and subtitles is expanding audiences. By adding appropriate subtitles or captions to a company’s YouTube channel, for instance, audiences that would otherwise not be able to fully comprehend the videos, whether because of a linguistic barrier or hearing impairment, can then enjoy them. This means a larger audience — and better business. For example, foreign language films that include subtitles in multiple languages have been able to break into global markets, and top foreign films sometimes achieve high honors in Hollywood. Without subtitles, such films would have had great difficulty gaining such vast popularity (and making so much money!). By expanding the audience, subtitles and captions can boost business while opening up new cultural horizons to a greater number of people.
